Not necessarily. Statistics from Google dashboards and social media insights show derived measures rather than absolute counts, and those measures can prove inaccurate.

Reading Between the Numbers: What Platform Data Really Shows

Huge user counts and millions of interactions: the illusion of great big numbers attracts more users to a platform. Framed with the right numbers, big mishaps can be turned into small hiccups in an instant.

Explore those numbers closely, though, and they tell a haunting story. Many individual cases sit hidden between the figures, and the same numbers that once made us feel secure start to scare us.

This article explores how platforms present data in ways that boost their ratings while the real situation stays hidden behind the scenes.

Key Takeaways

- Illusion of big numbers

- Moderation data vs Exposure risk

- When transparency meets litigation

- What tech-savvy users should look for

The Illusion of Big Numbers

Big platforms love big figures. They signal dominance, legitimacy, and cultural gravity. And they can make problems look smaller than they are.

A company can say harmful content is 0.01 percent of activity. Sounds microscopic. But if the platform has tens of millions of daily users, that sliver can still represent a steady stream of incidents. Percentages shrink the emotional weight. Absolute numbers bring it back.
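A rough sketch of that arithmetic in Python. Only the 0.01 percent share comes from the example above; the 50 million daily users and 20 interactions per user are hypothetical figures chosen for illustration, not data from any real platform.

```python
def harmful_items_per_day(daily_users: int, actions_per_user: int, harmful_share: float) -> float:
    """Convert a percentage-style share back into an absolute daily count."""
    return daily_users * actions_per_user * harmful_share


# Hypothetical inputs: 50 million daily users, 20 interactions each.
# 0.01 percent is written as the fraction 0.0001.
estimate = harmful_items_per_day(
    daily_users=50_000_000,
    actions_per_user=20,
    harmful_share=0.0001,
)

print(f"{estimate:,.0f} harmful items per day")
```

Under those assumptions, a share that sounds microscopic works out to roughly 100,000 items a day.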

Growth makes this harder. User bases can balloon faster than moderation teams. New features roll out before safety controls mature. Engagement rises while oversight struggles to keep up. In most reporting, growth reads like momentum. In practice, it also expands the surface area for risk.

Even enforcement stats can mislead. “We banned X accounts” sounds decisive, but it hides the timeline. How long were those accounts active? How many people did they contact? How many reports were filed before action happened? Takedowns measure response, not protection.

This is where scrutiny shows up, especially around youth-heavy platforms like Roblox. Questions about moderation capacity, age controls, and response time have fueled public criticism and legal pressure. When trust-and-safety metrics can’t account for repeated failures at scale, legal action over Roblox safety failures becomes a predictable consequence rather than a surprise.

Numbers carry authority. Without context, they can also carry cover.

Moderation Data vs. Exposure Risk

Transparency reports often emphasize volume: messages filtered, accounts banned, content removed, and automated tools improving detection. It looks like control.

The missing metric is exposure time.

If harmful content stays up for hours, the harm doesn’t politely wait for a takedown. Screenshots spread. DMs escalate. Predators pivot to private channels. By the time enforcement appears in a report, the damage can already be baked in.

Response speed matters as much as response volume. A platform might tout high removal rates “within 24 hours.” For adults, that sounds reasonable. For minors in real-time environments, 24 hours is enough time for a situation to turn serious.
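To see how a "within 24 hours" headline can coexist with long exposure, here is a minimal sketch that computes both figures from the same takedown log. The five records are invented for illustration, and the field layout (posted time, removed time) is an assumption about what such a log might contain.

```python
from datetime import datetime, timedelta
from statistics import median


def exposure_hours(posted: datetime, removed: datetime) -> float:
    """Hours a piece of content stayed visible before removal."""
    return (removed - posted).total_seconds() / 3600


# Hypothetical takedown log: (posted_at, removed_at) pairs.
base = datetime(2024, 1, 1, 12, 0)
records = [
    (base, base + timedelta(hours=2)),
    (base, base + timedelta(hours=6)),
    (base, base + timedelta(hours=11)),
    (base, base + timedelta(hours=23)),
    (base, base + timedelta(hours=30)),
]

hours = [exposure_hours(posted, removed) for posted, removed in records]

# The headline metric: share of removals that landed inside 24 hours.
within_24h = sum(h <= 24 for h in hours) / len(hours)

# The missing metric: how long a typical item was actually exposed.
median_hours = median(hours)

print(f"Removed within 24h: {within_24h:.0%}")
print(f"Median exposure: {median_hours:.1f} hours")
```

In this made-up log, 80 percent of items were removed within 24 hours, yet the typical item was visible for 11 hours. Both statements are true; only one of them usually makes it into the report.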

Broader research helps frame how constant online life has become for teens. Pew Research Center’s Teens, Social Media and Technology 2024 report points to how frequently teens are online and the kinds of experiences they encounter there. When so many kids are connected so often, moderation gaps don’t need to be massive to matter.

Reporting friction is another quiet factor. How easy is it for a child to flag something? How many taps? Does the report disappear into a void? Are parents alerted in a practical way, or only if someone knows to look? High removal numbers can reflect relentless user reporting, not a system that prevents harm from reaching kids in the first place.

Moderation reacts. Safety anticipates. Good reporting should make that distinction easy to see.

When Transparency Meets Litigation

Public transparency lives in curated spaces: dashboards, quarterly updates, polished language. The company controls the framing.

Legal scrutiny changes the rules.

When lawsuits arise, internal logs, escalation protocols, staffing ratios, and moderation timelines can enter discovery. The story stops being about what’s summarized and starts being about what actually happened, when it happened, and what was known internally.

That’s where mismatches become costly. If public messaging suggests robust safeguards while internal documentation shows consistent delays or understaffing, the contrast carries weight. If features introduced new risk pathways and warnings were ignored, timing becomes part of the record. Numbers that once felt tidy get dragged back into context.

Litigation also collapses the comfort of aggregation. Reports speak in totals. Courtrooms deal in individual harm. One case can shatter the soothing effect of a low percentage, because the percentage doesn’t sit in therapy afterward.

When transparency becomes evidence, precision and consistency start to matter more than spin.

Fun fact: According to some estimates, the entire digital universe holds over 44 zettabytes of data.

What Tech-Savvy Users Should Look For

Most people skim platform reports for the headline stats. If you’re comfortable with data, the real signals are in the details.

Check ratios first: moderators per million users, and whether safety staffing is growing as fast as the user base. If oversight lags, risk spreads quietly.
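A quick way to run that check, sketched in Python. The headcounts and user totals below are hypothetical; real figures would have to come from a platform's own disclosures, if it publishes them at all.

```python
def moderators_per_million(moderators: int, users: int) -> float:
    """Safety staffing normalized by the size of the user base."""
    return moderators * 1_000_000 / users


# Hypothetical snapshots: (moderators, users) at two points in time.
snapshots = {
    "year_1": (2_000, 40_000_000),
    "year_2": (2_600, 80_000_000),
}

ratios = {
    label: moderators_per_million(mods, users)
    for label, (mods, users) in snapshots.items()
}

for label, ratio in ratios.items():
    print(f"{label}: {ratio:.1f} moderators per million users")
```

In this example the moderation team grew by 30 percent, which would make a fine headline, while coverage per million users fell from 50.0 to 32.5 because the user base doubled.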

Then look at timing. How fast are reports reviewed? How long does harmful content stay up? A removal count means little if it happens after the damage is done.

Scrutinize age controls. A birthdate field isn’t verification. Stronger systems add friction that makes impersonation harder.

Finally, read the language like an editor. “Ongoing improvements” and “continued investment” are easy to say. Better reporting includes definitions, baselines, trendlines, and outside validation.

The Broader Pattern: Data Without Context

Safety reporting isn’t the only place where numbers can mislead. Tech is packed with metrics that sound definitive while dodging the context. Uptime figures that ignore outage length. Security claims with no supporting details. Success rates that quietly depend on perfect conditions.
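The uptime case is easy to work through. The sketch below converts an uptime percentage into a yearly downtime budget; the 99.9 percent figure is an assumed example, not a claim about any particular service.

```python
HOURS_PER_YEAR = 365 * 24


def downtime_hours(uptime_percent: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Hours of allowed downtime implied by an uptime percentage."""
    return period_hours * (100 - uptime_percent) / 100


budget = downtime_hours(99.9)
print(f"99.9% uptime allows about {budget:.2f} hours of downtime per year")
```

That comes to just under nine hours a year. The percentage alone doesn't say whether those hours arrived as many brief blips or as one long outage at the worst possible moment, which is exactly the context the headline figure leaves out.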

A 99 percent recovery figure sounds definitive until you ask what scenarios were included. Corruption? Encryption? Physical damage? Without methodology, the number floats.

Platform safety stats work the same way. Percentages need raw totals. Improvements need baselines. “Actions taken” needs timelines. Otherwise, interpretation turns into guesswork.

This pattern shows up across digital products, including in how data analytics shapes modern software systems and influences decisions, risk scoring, and the stories companies tell about performance. Analytics can clarify reality, or blur it, depending on what’s measured and what’s quietly ignored.

Independent audits and third-party validation help because they reduce the incentive to massage the picture. When standards are clear and replicable, confidence rises. When they aren’t, dashboards become theater.

Frequently Asked Questions

Are platform-reported numbers always accurate?

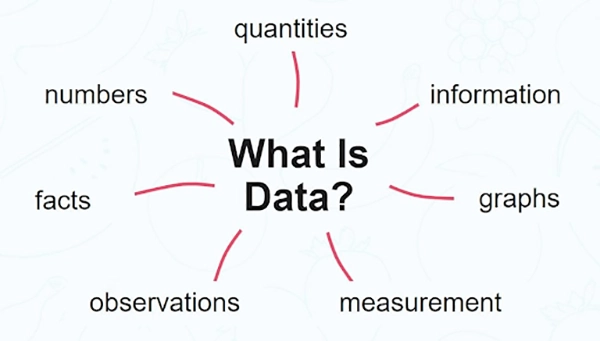

What matters most when investigating real data?

Context matters most, because it shows how, and under what conditions, the “real” results were obtained.

What does “outlier” mean?

An outlier is a data point that differs sharply from the usual observations. It can indicate an internal data error or a genuinely unusual result obtained under the same conditions.

How can I tell whether a platform’s data is transparent?

If a platform publishes its statistics along with the context needed to interpret them, its data is most likely transparent and reliable.
